5 Dimensions of AI Proficiency

Our assessment evaluates the complete lifecycle of working with AI, from knowing when to use it, through getting results, to delivering work you can stand behind.

AI Task Strategy

Knowing when to use AI, and when not to. Can they assess a task and decide what to delegate to AI and what to keep in human hands?

Prompting & Interaction Quality

Getting results from AI tools. Can they write effective prompts and iterate when the first attempt doesn't land?

Critical Evaluation & Validation

Spotting what AI gets wrong. Can they catch errors, hallucinations, and gaps before those reach real work?

Ethical & Responsible Use

Using AI without creating risk. Do they understand privacy, bias, IP, and regulatory boundaries?

Workflow Integration & Output Quality

Turning AI output into professional work. Can they edit, synthesise, and combine AI content with original thinking?

Built for Talent Decisions. Designed by Science.

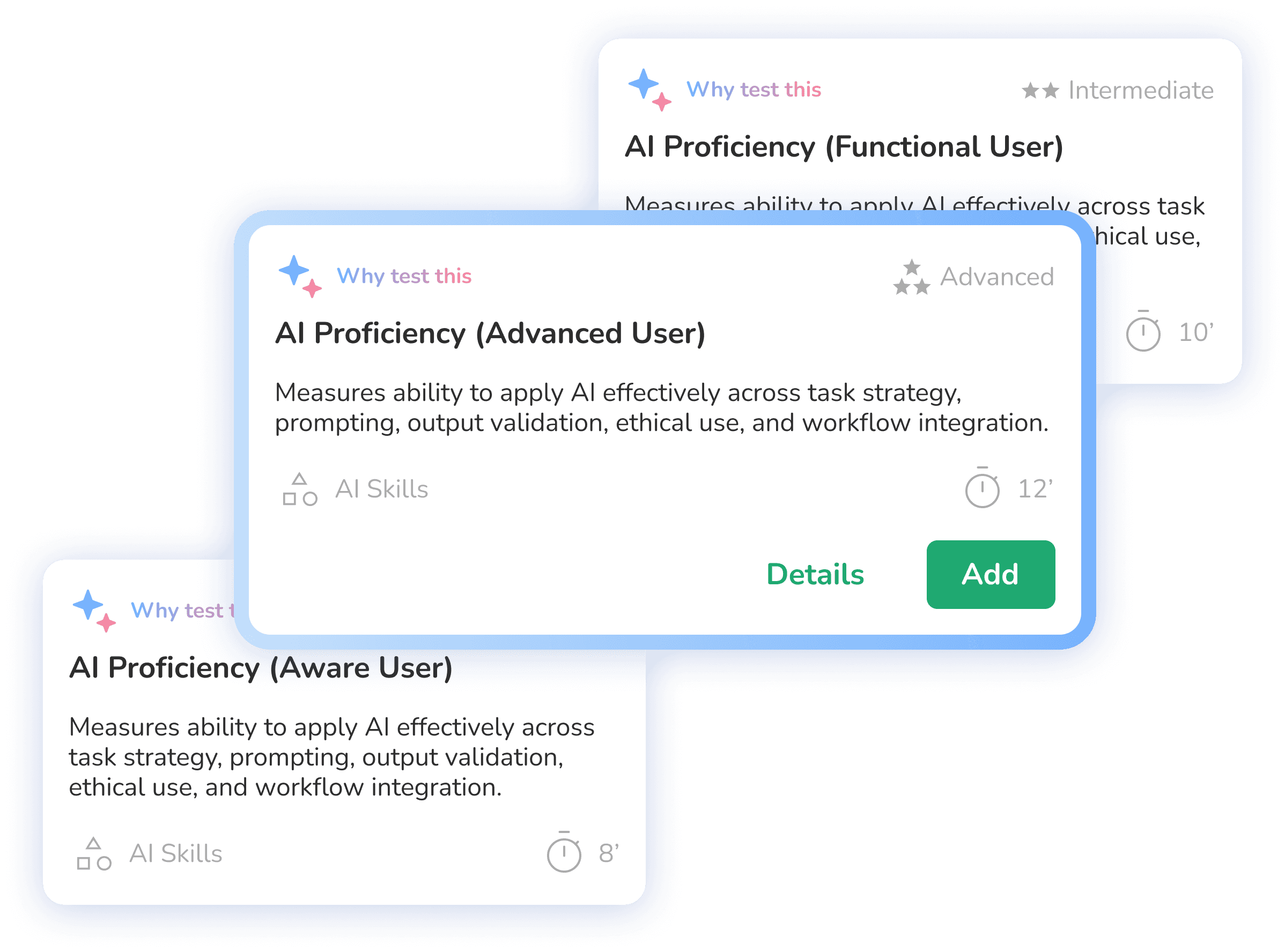

Step 1: Choose the proficiency level

Pick the proficiency level the role requires: Aware, Functional, or Advanced. Each level has its own scenarios matched to the AI competency expected, whether you're screening a new hire or benchmarking a current employee.

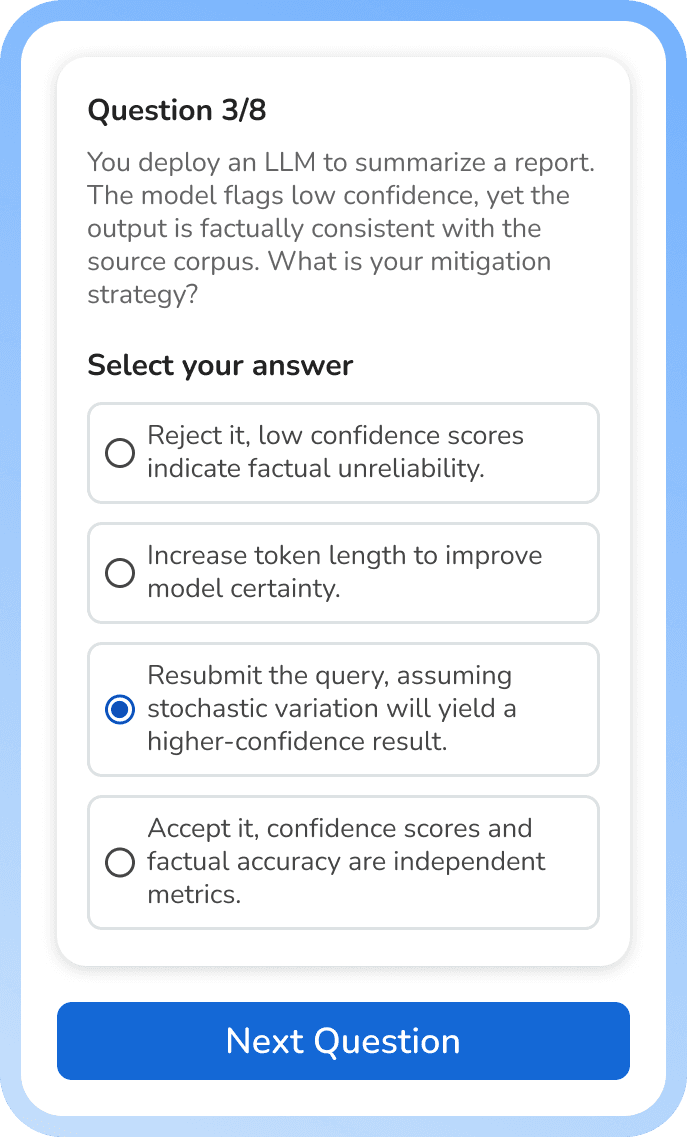

Step 2: Candidates complete realistic scenarios

No trivia questions. No textbook definitions. People work through realistic business scenarios that test how they actually approach AI-assisted tasks. The assessment takes about 15 minutes.

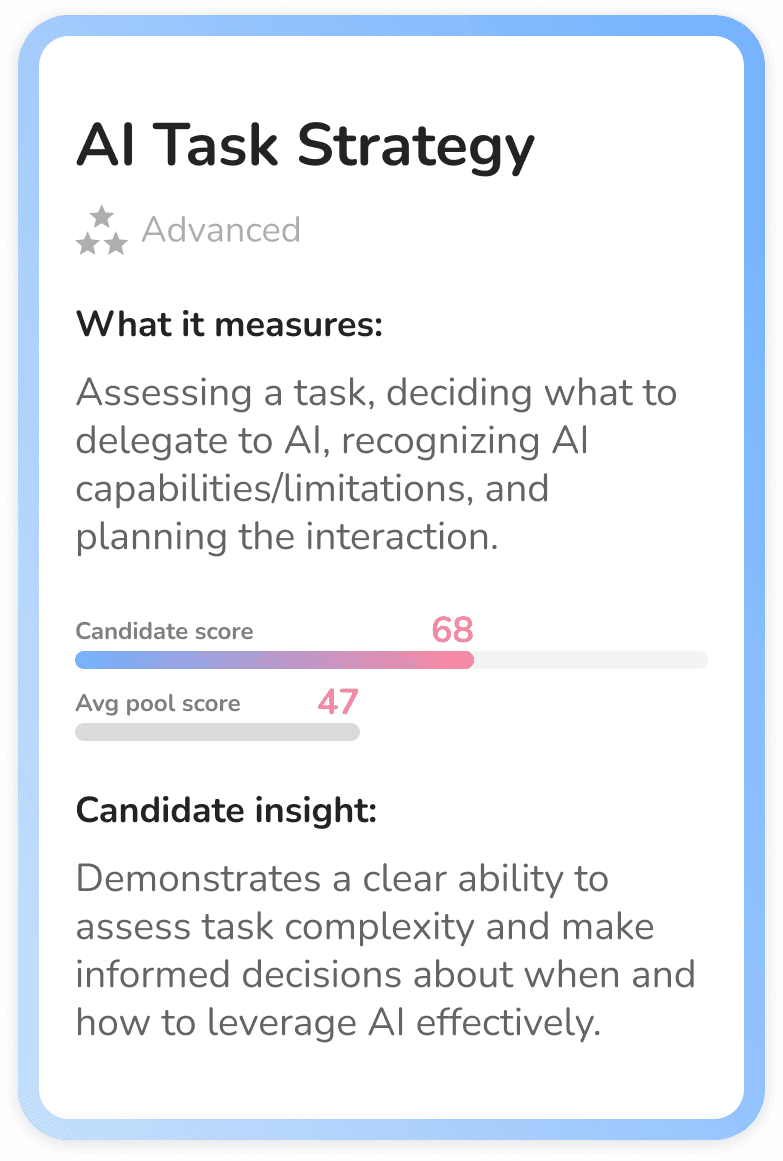

Step 3: Get dimension-level insights

Get a detailed proficiency profile with scores across all five dimensions, 0 to 100. See exactly where each person excels and where the gaps are. No pass/fail binary. Use it to make a hiring call, plan targeted upskilling, or both.

Not Another AI Quiz

The market is full of AI assessments. Most test what candidates know about AI. We measure what they can actually do with it.

COMPETITORS

Developer-focused tools

Test ML engineering, model deployment, and coding skills. Great for hiring data scientists. Irrelevant for 95% of your workforce.

AI literacy quizzes

Test whether someone can define "hallucination." Knowing the vocabulary doesn't mean they can do the work.

Tool-specific assessments

Test proficiency with one product. That product updates next quarter. The skills become obsolete.

BRYQ

Bryq AI Proficiency Assessment

Measures how people actually work with AI in realistic scenarios. Tool-agnostic. Role-universal. Built on peer-reviewed research.

Built on Science, Not Guesswork

Our competency framework synthesises six peer-reviewed research sources spanning international government frameworks, academic competency models, and policy-grade workforce research.

Each dimension is grounded in established AI literacy and digital skills scholarship — not invented in a product sprint.

The result: a measurement instrument built on the most rigorous AI proficiency research available, validated through established psychometric methods.

Match the Assessment to the Role

Works for pre-hire screening, internal benchmarking, and annual AI readiness audits.

Aware

For roles where basic AI awareness is sufficient. Can the candidate use AI tools for simple tasks, understand their limitations, and avoid common pitfalls?

Functional

For roles where regular AI use is expected. Can the candidate effectively prompt AI tools, evaluate outputs critically, and integrate AI into standard workflows?

Advanced

For roles requiring sophisticated AI application. Can the candidate design complex AI workflows, evaluate AI opportunities strategically, and guide others in responsible adoption?

FAQ

Find answers to the most frequently asked questions about Bryq